One-Shot Learning

One of the biggest criticisms of state-of-the-art Deep Learning systems is that they require massive amounts of labeled data to work well. Humans can learn new concepts quickly from very few examples, and we want our machines to do the same. One-shot learning is the paradigm that formalizes this problem. It refers to Deep Learning problems where the model is given only a single training example of a class and has to re-identify that class in the test data. A popular example of One-Shot Learning is found in facial recognition systems.

How can you recognize a face again after only having seen it once before?

Instead of having to sign in at a fitness gym using a membership card with your face on it, you could have a computer vision system that recognizes your face and grants you entry. In classical Deep Learning systems, this would require many pictures of your face so that a Convolutional Network could be trained to identify you. It also raises additional problems, such as how to react when the system sees a face it has never seen before. The solution is to train the network to learn a distance function between images rather than to classify them explicitly: two pictures of the same person should be close together, and pictures of different people should be far apart. This is the central idea behind One-Shot Learning.
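The gym scenario above can be sketched in a few lines, assuming a trained network has already turned each face into a short embedding vector. The embeddings, the names `gallery` and `identify`, and the threshold value are all made up for illustration; in practice the threshold would be tuned on held-out data.

```python
import math

def euclidean_distance(a, b):
    """Straight-line distance between two embedding vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def identify(query, gallery, threshold):
    """Return the enrolled name closest to `query`, or None if no
    enrolled embedding lies within `threshold` (an unknown face)."""
    best_name, best_dist = None, float("inf")
    for name, reference in gallery.items():
        dist = euclidean_distance(query, reference)
        if dist < best_dist:
            best_name, best_dist = name, dist
    return best_name if best_dist <= threshold else None

# Hypothetical embeddings: one enrolled picture per gym member.
gallery = {"alice": [0.9, 0.1, 0.0], "bob": [0.0, 0.8, 0.6]}

print(identify([0.85, 0.15, 0.05], gallery, threshold=0.5))  # close to alice
print(identify([-0.7, -0.7, 0.1], gallery, threshold=0.5))   # far from everyone
```

Because the decision is based on distance rather than on a fixed set of output classes, enrolling a new member only means adding one entry to `gallery`, and a face that is far from every entry can simply be rejected.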

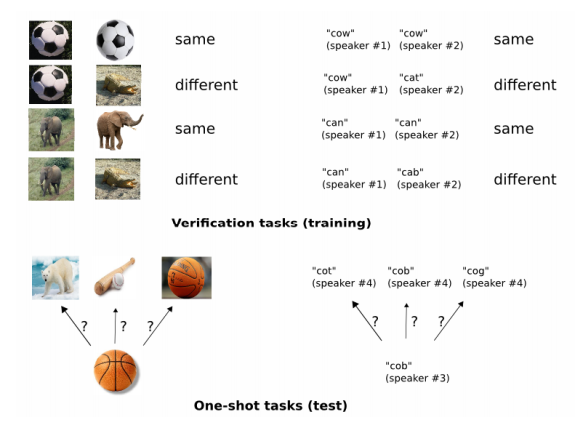

The idea is to train the model to differentiate between same and different pairs, and then to generalize what it has learned to evaluate new categories. The role of Deep Learning in this process is to apply a series of functions that convert a high-dimensional input, such as an image matrix, into a representation, usually much lower-dimensional, in which the classes are easily separable. For example, a Convolutional Neural Network that distinguishes dogs from cats learns to convert an image of size, say, (224 x 224 x 3) into a vector of size (100 x 1) in which cats and dogs are easy to tell apart. This is accomplished by optimizing the parameters of the network's many layers through back-propagation and gradient descent. The same idea applies across modalities, including images, text, speech, video, and biological data.
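To make the "same and different pairs" concrete, here is a minimal sketch of how such pairs could be built from an ordinary labeled dataset. The file names and the helper `make_pairs` are hypothetical, and a real pipeline would usually also balance the number of same and different pairs.

```python
from itertools import combinations

def make_pairs(examples):
    """Turn (item, label) examples into (item_a, item_b, target) triples,
    where target is 1 for a same-class pair and 0 for a different-class pair."""
    return [
        (a, b, int(label_a == label_b))
        for (a, label_a), (b, label_b) in combinations(examples, 2)
    ]

# Hypothetical labeled images.
examples = [
    ("cat_1.png", "cat"),
    ("cat_2.png", "cat"),
    ("dog_1.png", "dog"),
]

for item_a, item_b, target in make_pairs(examples):
    print(item_a, item_b, target)
```

The class names themselves never reach the model, only the same/different target, which is what lets the trained model be applied to categories it was not trained on.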

Training a Deep Neural Network to learn features useful for One-Shot Learning is accomplished in two stages. First, the model is trained on the verification task: it receives pairs of images and must label each pair as belonging to the ‘same’ or a ‘different’ class. Second, these ‘same/different’ predictions are used in the one-shot setting to identify new images. A new image is compared against one example of each candidate class, and it is assigned to the class whose example yields the highest ‘same’ probability from the verification model.
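The second stage can be sketched as follows, assuming the verification model is already trained. The form of `same_probability` (a sigmoid over a weighted L1 distance between embeddings) is one plausible choice made for illustration, and the weights, bias, and embeddings are invented numbers, not values from the article.

```python
import math

def same_probability(emb_a, emb_b, weights, bias):
    """Stand-in for the trained verification model: probability that two
    embeddings belong to the same class (sigmoid of a weighted L1 distance)."""
    score = sum(w * abs(x - y) for w, x, y in zip(weights, emb_a, emb_b)) + bias
    return 1.0 / (1.0 + math.exp(-score))

def one_shot_classify(query, support, weights, bias):
    """Compare `query` with the single example of each class in `support`
    and return the class with the highest 'same' probability."""
    probs = {
        label: same_probability(query, example, weights, bias)
        for label, example in support.items()
    }
    best_label = max(probs, key=probs.get)
    return best_label, probs[best_label]

# Hypothetical parameters: negative weights mean that a larger
# distance lowers the 'same' probability.
weights, bias = [-4.0, -4.0], 2.0

# One example per new class, i.e. the "one shot".
support = {"A": [0.0, 0.0], "B": [1.0, 1.0], "C": [0.0, 1.0]}

label, prob = one_shot_classify([0.9, 0.8], support, weights, bias)
print(label, round(prob, 3))
```

Note that none of the classes in `support` needs to have appeared during verification training; only the pairwise comparison is learned.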

Thank you for reading! Hopefully this article was a good introduction to One-Shot Learning!

Many of the ideas in this article are presented in the research paper "Siamese Neural Networks for One-shot Image Recognition" by Gregory Koch, Richard Zemel, and Ruslan Salakhutdinov: https://www.cs.cmu.edu/~rsalakhu/papers/oneshot1.pdf